The size of the style input image is probably one of the most fun and interesting parameters to vary. It's also one of the most annoying when you want consistent results, and it highlights one of the limitations of current convolutional network designs: they don't have inherent control over scale.

Achieving any kind of consistency requires careful planning: the input and output pixel dimensions have to be resized to consistent ratios. Scaling the same style image down means that different feature kernels will be learned and applied to the content image.
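To keep the ratios consistent, it helps to compute the resized style dimensions up front rather than eyeballing them. The sketch below is only an illustration: the helper name `scaled_style_size` and the 1024x768 style image are assumptions, not part of any particular style transfer tool.

```python
def scaled_style_size(style_size, multiplier):
    """Return the (width, height) of the style image after scaling.

    style_size is a (width, height) tuple in pixels and multiplier is the
    style scale multiplier. Both names are hypothetical.
    """
    if multiplier <= 0:
        raise ValueError("multiplier must be positive")
    width, height = style_size
    # Round to whole pixels, but never let a side collapse below 1 px.
    return (max(1, round(width * multiplier)),
            max(1, round(height * multiplier)))


if __name__ == "__main__":
    style_size = (1024, 768)  # assumed style image size, for illustration only
    for multiplier in (0.1, 0.5, 1, 2, 4):
        print(multiplier, scaled_style_size(style_size, multiplier))
```

Applying the same multiplier to both sides preserves the aspect ratio, so only the scale of the style features changes from one run to the next.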

Another non-obvious fact: if you have two content images of the same subject but one is taken from further away, the style will look different in each transfer because the subject occupies a smaller pixel area in one of the images. Just something to be aware of.
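One possible way to compensate is to scale the style by the same factor that the subject shrank or grew by. This is a rough sketch that assumes stroke size in the output scales linearly with the style image size; the function name and the pixel measurements are hypothetical.

```python
def compensated_multiplier(base_multiplier, reference_subject_px, new_subject_px):
    """Adjust a style scale multiplier for a subject that appears at a
    different pixel size in a second content image.

    reference_subject_px and new_subject_px are the subject's width (or
    height) in pixels in the two images. Assumes strokes scale linearly
    with the style image size.
    """
    if reference_subject_px <= 0 or new_subject_px <= 0:
        raise ValueError("subject sizes must be positive")
    return base_multiplier * new_subject_px / reference_subject_px


# A subject that is 400 px wide in the first image and 200 px wide in the
# second would call for half the style scale in the second transfer.
half_scale = compensated_multiplier(1.0, 400, 200)
```

Measuring the subject by hand is crude, but it is enough to keep the strokes roughly the same size relative to the subject across both transfers.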

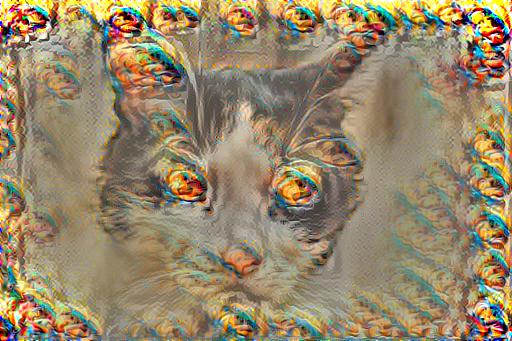

All of the images below were created with a content to style ratio of 1 to 10, a learning rate of 10 and 1000 iterations. The first image shows the style scaled down by a factor of 10. What's interesting is that the source style image is effectively learned wholesale as a feature and appears stamped all over the output.

Style scale multiplier 0.1.

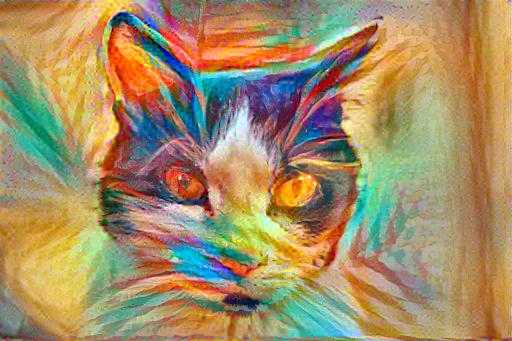

Next, the style is scaled down by only one half, and now the detail frequencies of the style and the content overlap enough to not leave gaps. The output carries much of the original style and we can start to see how things work. The brush strokes around the whiskers and eyes are numerous and fairly thin.

Style scale multiplier 0.5.

Next is a transfer without any scaling... sort of. The scale factor is whatever happened to be the inherent pixel size ratio between the content features and the style features at the given input and output resolutions. The only thing that's special about this factor is that it takes maximal advantage of the style image's feature density. I suspect that in future neural network designs we'll be resizing the features, not the inputs.

Style scale multiplier 1.

In this next image the style is scaled to twice its size. Perhaps you prefer this result, which is made up of broader brush strokes and less high frequency detail.

Style scale multiplier 2.

By the time we scale by 4, the features are so large that they effectively just color large patches. I don't know for sure what the learned feature kernels look like, but I suspect there's no high frequency detail in them whatsoever.

Style scale multiplier 4.

Neural style transfer as understood today does a good job in some respects but falls really short in others. Control over style scale should happen at the network feature level, not at the input level where detail is lost during the resize.

Primarily for fun: an animation showing style scales from 1.5 to 2.5.

Another thing to try is solving for multiple input style images in a single transfer, perhaps clones of the original at different scales. Making the transfer object patch aware could also be interesting, since style transfer doesn't respect any kind of semantic structure and paints rather indiscriminately. I'd like to explore these things in a future set of articles, but goodbye for now.