Neural networks are special because they can learn [encode] complex things [as relationships], and they stand out because they can assimilate multidimensional information. In neural style transfer the output space is also multidimensional and non-linear. Let's look at how the hyper-parameters affect that output space.

The first one is easy; after all, it's the basis of the paper. It's still worth revisiting to set up the context for the other parameters. Let's start by finding a style image... with style:

Salt Kettle, Bermuda - Winslow Homer, 1899. Image thanks to the National Gallery of Art.

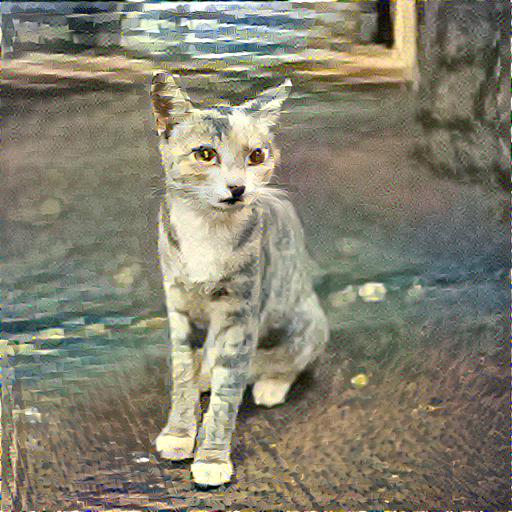

And a content image to stylize:

Stray cat, Hawaii - Mugur Marculescu 2015.

Let's generate a sequence ranging from pure style to a heavy emphasis on content. This is easy to show because we're only changing a single parameter, the content weight. All images are generated at a style weight of 100. Since what matters is the style-to-content ratio, it's OK to vary only the content weight.
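To make the ratio argument concrete, here is a minimal sketch. It assumes the usual weighted objective, where the total loss is the content weight times the content loss plus the style weight times the style loss. The function names and the example loss values are hypothetical and are not taken from the code that generated these images; only the weights match the sequence shown in this post.

```python
# Content weights used for the image sequence; the style weight stays fixed.
CONTENT_WEIGHTS = [0, 0.5, 1, 2, 5, 10, 20, 40, 100, 1000]
STYLE_WEIGHT = 100


def total_loss(content_loss, style_loss, content_weight, style_weight):
    """Weighted sum of the two losses (assumed form of the objective)."""
    return content_weight * content_loss + style_weight * style_loss


def style_to_content_ratio(content_weight, style_weight=STYLE_WEIGHT):
    """How many times more the style term is weighted than the content term."""
    if content_weight == 0:
        return float("inf")  # pure style: content is not optimized at all
    return style_weight / content_weight


ratios = [style_to_content_ratio(w) for w in CONTENT_WEIGHTS]

for weight, ratio in zip(CONTENT_WEIGHTS, ratios):
    print(f"content={weight:<6} style={STYLE_WEIGHT}  style/content={ratio}")
```

Under this assumed objective, multiplying both weights by the same constant multiplies the total loss by that constant, so the image that minimizes it does not change. In practice the gradient scale does change, so the optimizer's step size may need adjusting.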

The first image below is very interesting. The optimizer is essentially not solving for content at all. What's left is pure style: basically nonsensical watercolor features slapped together.

Even a tiny drop of content weight helps. In the second image, at a 200x balance in favor of style, the cat starts to emerge and is easily recognizable.

Content 0 Style 100

Content 0.5 Style 100

Content 1 Style 100

Content 2 Style 100

Content 5 Style 100

Content 10 Style 100

Content 20 Style 100

Content 40 Style 100

Content 100 Style 100

Content 1000 Style 100

This last image is also quite interesting. The content now outweighs the style by 10x and the image looks almost photographic in quality. The overall tone and features are very similar to the original content image. The content has been "reconstructed" by the layers of convolutional feature filters, but the reconstruction is not perfect.

It has accumulated errors along the way [either by feature omission or addition]. Some, I suspect, come from the mismatch between the visual features extracted from the style image and the ones that would have been extracted from an identity image of the content. Another source of errors could be the step size used to train the VGG19 network in the first place.

For a little bit of fun, here's a ping-pong animation of the content weight being varied from 0 to 1000 and back.
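For anyone who wants to build a similar loop, the frame ordering can be sketched as follows. This is only an illustration of the ping-pong idea, assuming one rendered frame per content weight; the `ping_pong` helper is hypothetical and is not the tool used to make the animation here.

```python
def ping_pong(frames):
    """Return frames played forward, then backward, skipping both endpoints
    on the way back so the loop does not stutter when it repeats."""
    frames = list(frames)
    return frames + frames[-2:0:-1]


# One frame per content weight, in the order shown in this post.
content_weights = [0, 0.5, 1, 2, 5, 10, 20, 40, 100, 1000]
playback_order = ping_pong(content_weights)
print(playback_order)
```

Dropping the first and last frames from the return trip means each endpoint appears once per cycle when the animation loops.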
